Multi-Tiered Architecture

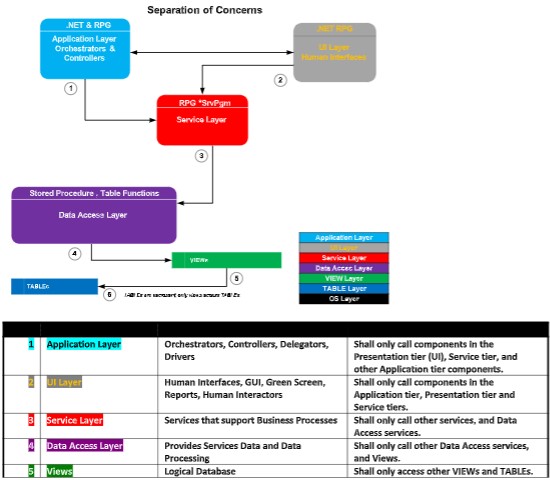

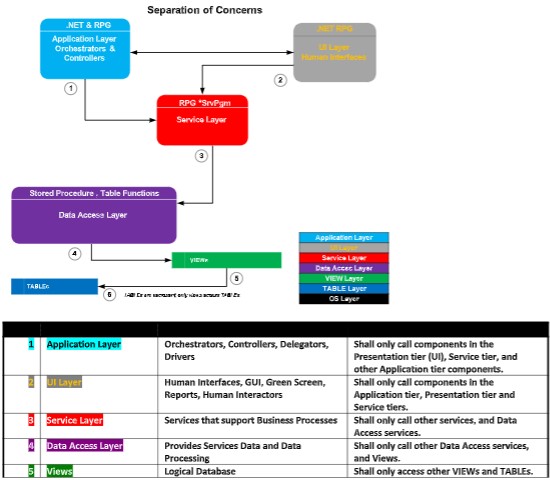

The 6 Tiers

Constructing solutions which map components to a multi-tier architecture (MTA) make solutions very easy to code, maximize shareability, are easy

to assign software components to a team of developers to construct, easy to unit-test and QA, and easy to ramp up others new to that solution. In

a Multi-Tier Architecture (MTA), all components of a software solution are placed in its proper tier, and a separation of concerns (SoC) is enforced.

The following diagram shows the tiers that all components are coded into:

1. Application Tier

2. Presentation Tier

3. Service Tier

4. Database Tier: (a) Data-Access, (b) Views, (c) Tables

These are the 6 layers which make up the Multi-Tier Architecture.

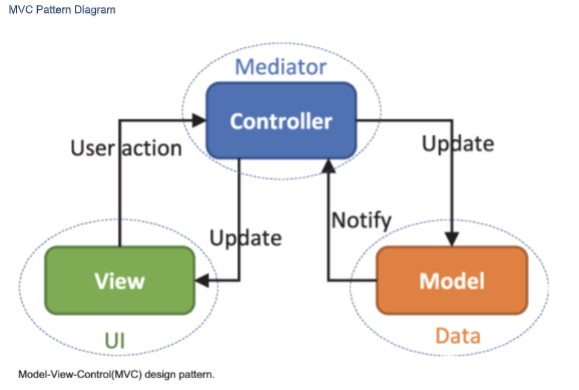

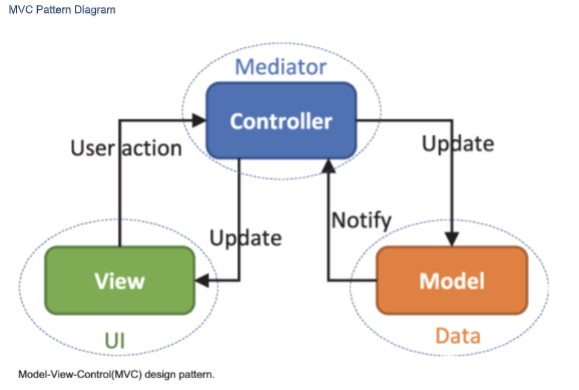

MVC

MVC is less an architecture, and more a design pattern. MVC is a loose abbreviation of the Multi-Tier Architecture (MTA) shown above, whose tiers

can be distilled down to MVC. MVC stands for Model-Viewer-Controller, and it basically takes the 6 tiers of the MTA and translates them into just

3:

1. Model

2. Viewer

3. Controller

Model

The Model refers to the Database, and includes the 3 subparts: Data-Access, Views and Tables.

Viewer

The Viewer are human interfaces such as UI’s, GUI’s, printed form output, emails, PDF’s, and such, but a viewer can also be a program listening for

a message.

Controller

The Controller is the program code base, the logic that ties everything together.

MVC Pattern Diagram

These are the 6 layers which make up the Multi-Tier Architecture.

MVC

MVC is less an architecture, and more a design pattern. MVC is a loose abbreviation of the Multi-Tier Architecture (MTA) shown above, whose tiers

can be distilled down to MVC. MVC stands for Model-Viewer-Controller, and it basically takes the 6 tiers of the MTA and translates them into just

3:

1. Model

2. Viewer

3. Controller

Model

The Model refers to the Database, and includes the 3 subparts: Data-Access, Views and Tables.

Viewer

The Viewer are human interfaces such as UI’s, GUI’s, printed form output, emails, PDF’s, and such, but a viewer can also be a program listening for

a message.

Controller

The Controller is the program code base, the logic that ties everything together.

MVC Pattern Diagram

We will code to the MTA’s 6 tiers because it provides more granularity for all the components which make up a solution.

Concrete and Abstract

Looking at the MTA diagram above, starting at the bottom tier, logic and data is very concrete and as you move up the tiers logic and logic & data

must get more and more abstract.

The term concrete refers to logic that does the actual heavy lifting, does the detailed calculating, defines business rules in detail, and its where the

work for a particular task is actually done. In software, the concrete code is where the rubber meets the road.

Now expanding upon this concrete/abstract thinking, the very top tier of the MTA diagram is the Application Tier. The components referenced at

this tier are very abstract and they delegate the actual processing to more concrete services found in the tiers below.

In this way, the components in the upper Tiers do no heavy lifting, not much logic, as they only call a bunch of services which abstract the business

functions to components in the upper tiers. The components adhere to a strict Separation of Concerns (SoC) when calling services in the lower

tiers. At the top, Application-tier Components only care about something getting done, the what, and they do not care about how it gets done (the

how). This delegation of functionality is at the heart of MTA and is what is meant by Separation of Concerns (SoC).

Put another way, components in one tier are forbidden to call/directly access components in a higher tier, but they can call components in the

next tier below.

Abstract services delegate processing to lower tier which contains more concrete services.

We must not place concrete logic in components in the higher tiers. In short, all tasks must be delegated down.

Example of Components Working Together

Let’s say that you have a component in the Application Tier which needs to get a Customer Address to present on a GUI. Let’s call this application

component CustomerGUI(). Now you could simply put embedded SQL in that Application Component to query the Customer Master table to get

the Customer Address, and this would work. But it’s a really bad, traditional, monolithic and legacy way to do things and for many reasons which

will become clearer as you continue reading this document.

Placing concrete code where it does not belong (higher tiers) is a violation of the MTA and Separation of Concerns (SoC).

A far better way would be to code a new service in the service tier called returnCustomerAddress() and call it from the component in the

application layer CustomerGUI(). returnCustomerAddress() would call a component in the Data Access Tier called

returnCustomerAddress() which might return an entire row from the Customer Master VIEW, which is built over the Customer Master TABLE.

The service component returnCustomerAddress() would simply return the Customer Address value to the application caller CustomerGUI().

So here would be the calling structure, and I color coded it to match the Tiers of the MTA diagram above:

CustomerGUI()

returnCustomerAddress()

returnCustomerMasterRow()

CustoemrMasterPrimaryVIEW

CustomerMasterTable

Code to Name Spaces

Now you could also have done it the following way, and it would also work, but it would not be the best way:

CustomerGUI()

returnCustomerMasterRow()

CustomerMasterPrimaryVIEW

CustomerMasterTable

The call structure does not make it obvious that the application CustomerGUI() needs the Customer Address from the direct call to the DAL

component returnCustomerMasterRow(). Shown above, a call is made to returnCustomerMasterRow() and the Customer Address is cherry picked

from the returned values.

In the first example, the service tier component returnCustomerAddress() was called, and its name makes it exceedingly clear that the Customer

Address is what is returned to the application CustomerGUI(). And that is what we are going for: being exceedingly clear as one reads the code.

The use of returnCustomerAddress() allows us to code to a Name Space. One reading the code, reads a call to returnCustomerAddress(), which is

far more clear of intent than a call to returnCustomerMasterRow() is.

Don’t worry if the coding seems excessive, or not taking advantage of the DAL code directly (sharing), because coding to Name Spaces, where the

Name describes what the call specifically does calls the DAL component returnCustomerMasterRow() under the covers anyways, and there is no

measurable cost in performance, but the readability is greatly improved. That means less bugs, easier to support, easier to understand!

Too Many Reads of Same Row is Inefficient?

Is it? What if your code needs Customer Address and Customer Balance? Create two services that both call returnCustomerMasterRow()? This

seems too inefficient because it seems the customer row will be read twice, right? And everyone knows a developer must minimize the number of

reads and writes to the database for fastest execution times, right?

Wrong!

The first call of returnCustomerMasterRow() would pay the cost in time of the system fetching the required customer master row. Subsequent

fetches of that same customer master row are from the cache, which is in RAM memory, and fetches of data from the cache are very cheap in time

and resources. This is a small price for making the code very readable, making the intentions very obvious. In other words, a 2 nd call to the same

row is cheap because that row was placed in RAM by the first read.

Tier to Tier Component Calls – What is Allowable?

And because components are forbidden to call components in higher Tiers or skip Tiers, the following call structure would not be allowable:

returnCustomerAddress()

CustomerGUI()

CustomerMasterTable

returnCustomerMasterRow()

CustomerMasterPrimaryVIEW

CustomerMasterTable

In other words, callers are limited to calling/referencing components in the same or next lower Tier.

Let’s discuss this MTA diagram starting at the bottom tier and working our way up (going from concrete to abstract).

The Tiers

Database Tier - Purple

The Database Tier is made up of 3 sub-layers:

1. Data-Access Layer

2. View Layer

3. Table layer

Table Layer

At the bottom of the Database Tier is the Table layer, and this is where all tables are placed. The only components that can reference these tables

directly are SQL VIEWs in the View layer right above the Table layer. Components in other layers are not allowed to reference tables directly.

These tables have Constraints defined to them and this is where a lot of the business logic is enforced, as well as references between tables.

There are 5 types of constraints:

1. NOT NULL

2. DEFAULT

3. CHECK

4. PRIMARY KEY (referential integrity)

5. FOREIGN KEY (referential integrity)

DB2 INDEXes can be placed over TABLEs to speed up row access, however one must be mindful to not build too many or too few because too many

can slow down INSERTs, UPDATEs and DELETEs and too few can slow down row access.

All TABLEs must be normalized, and this means nearly all of them must have defined two types of Primary Key, the first being a Natural PRIMARY

KEY and the 2 nd is an Unnatural PRIMARY KEY (IDENTITY column) and doing this will satisfy Normal Form (1NF). More on normaliztion later in this

document.

View Layer

The View layer is right above the Table Layer, and as you may guess the View Layer is made up of SQL VIEWs which are built over the components

in the Table layer. These VIEWs are the only components in the MTA that are allowed to access the components in the Table layer directly. Each

TABLE has a PRIMARY VIEW defined to it, and it is this VIEW that is used for INSERTs, UPDATEs, and DELETEs for a TABLE. In this way, such

operations are not allowed to happen directly to a TABLE.

SQL VIEWs abstract TABLEs.

SQL VIEWs are custom interfaces into TABLEs and are created as required by an application. There can never be too many VIEWs because the

number of VIEWs does not slow down processing on a server the same way that too many Logical Files or INDEXes will. This is because VIEWs are

not maintained by the system if they are not materialized (open), whereas Logical Files and INDEXes in nearly all case are maintained even if they

are not open, and this maintenance can slow system response-time during I/O (INSERTs, UPDATEs, DELETEs).

Data-Access Layer

Data-Access Layer (DAL) components are programs that extract (SELECT queries), INSERT, UPDATE, and DELETE row data from components in the

View Layer. The components in the DAL are the only ones allowed to access components in the View layer directly.

These DAL components abstract components in the View layer.

DAL components can be written as RPG sub-procs, or native DB2 Stored Procedures, DB2 User Defined Functions (UDF), or DB2 TABLE Functions

(UDTF).

DAL components which return data from VIEWs should often be coded to return a page of rows at a time, and this page of rows can be an array or

CURSOR. This keeps the execution speed of DALs very fast. In other words, a DAL component must almost never return an entire result-set to a

caller.

All DAL components are coded to a Namespace (to be defined soon).

Components in the DAL are the only ones which can have raw SQL statements. It is very important that SQL statements are not strewn across

all the other tiers of the MTA.

Services Layer

Components in the service layer provide functionality for the application, presentation and other service layer components. Components in the

service layer get data, and process data through calls to components in the Data-Access Layer (DAL).

Components in the Service Layer are the only ones which can call components in the DAL.

Components in the Service Layer must not contain any raw SQL statements.

All Service components are coded to a namespace (more on this later).

Presentation Layer

The Presentation Layer contains components which provide human-readable (human-interfaced) input or output such as these:

1. UIs

2. Emails

3. Texts

4. GUIs

5. Green Screens

6. Printed Reports

These components get their data and data processed by calling components in the Service Layer.

The Presentation Layer must not have any raw SQL.

Presentation components provide humans an abstract view of the application.

Presentation type components must only be placed in the Presentation Layer.

Application Layer

The Application Layer contains components which provide the highest level of functionality, and for this reason they are often called from menus

which end-users use, from drivers, orchestrators, as web services, and command lines, but they can also be called by other programs in the

application layer.

Application Layer components control, delegate, orchestrate, and contain little other logic. Application Layer components mostly just call

components in the service and presentation tiers, delegating functionality to components in those tiers. Delegation will be discussed later in this

document.

Application Layer components can only call components from the Presentation, and Service layers.

Components in the Application Layer must not have any raw SQL statements.

Tying it All Together

The colors in this diagram correspond to those on the MTA diagram, so refer to the MTG diagram when reading this section.

We will code to the MTA’s 6 tiers because it provides more granularity for all the components which make up a solution.

Concrete and Abstract

Looking at the MTA diagram above, starting at the bottom tier, logic and data is very concrete and as you move up the tiers logic and logic & data

must get more and more abstract.

The term concrete refers to logic that does the actual heavy lifting, does the detailed calculating, defines business rules in detail, and its where the

work for a particular task is actually done. In software, the concrete code is where the rubber meets the road.

Now expanding upon this concrete/abstract thinking, the very top tier of the MTA diagram is the Application Tier. The components referenced at

this tier are very abstract and they delegate the actual processing to more concrete services found in the tiers below.

In this way, the components in the upper Tiers do no heavy lifting, not much logic, as they only call a bunch of services which abstract the business

functions to components in the upper tiers. The components adhere to a strict Separation of Concerns (SoC) when calling services in the lower

tiers. At the top, Application-tier Components only care about something getting done, the what, and they do not care about how it gets done (the

how). This delegation of functionality is at the heart of MTA and is what is meant by Separation of Concerns (SoC).

Put another way, components in one tier are forbidden to call/directly access components in a higher tier, but they can call components in the

next tier below.

Abstract services delegate processing to lower tier which contains more concrete services.

We must not place concrete logic in components in the higher tiers. In short, all tasks must be delegated down.

Example of Components Working Together

Let’s say that you have a component in the Application Tier which needs to get a Customer Address to present on a GUI. Let’s call this application

component CustomerGUI(). Now you could simply put embedded SQL in that Application Component to query the Customer Master table to get

the Customer Address, and this would work. But it’s a really bad, traditional, monolithic and legacy way to do things and for many reasons which

will become clearer as you continue reading this document.

Placing concrete code where it does not belong (higher tiers) is a violation of the MTA and Separation of Concerns (SoC).

A far better way would be to code a new service in the service tier called returnCustomerAddress() and call it from the component in the

application layer CustomerGUI(). returnCustomerAddress() would call a component in the Data Access Tier called

returnCustomerAddress() which might return an entire row from the Customer Master VIEW, which is built over the Customer Master TABLE.

The service component returnCustomerAddress() would simply return the Customer Address value to the application caller CustomerGUI().

So here would be the calling structure, and I color coded it to match the Tiers of the MTA diagram above:

CustomerGUI()

returnCustomerAddress()

returnCustomerMasterRow()

CustoemrMasterPrimaryVIEW

CustomerMasterTable

Code to Name Spaces

Now you could also have done it the following way, and it would also work, but it would not be the best way:

CustomerGUI()

returnCustomerMasterRow()

CustomerMasterPrimaryVIEW

CustomerMasterTable

The call structure does not make it obvious that the application CustomerGUI() needs the Customer Address from the direct call to the DAL

component returnCustomerMasterRow(). Shown above, a call is made to returnCustomerMasterRow() and the Customer Address is cherry picked

from the returned values.

In the first example, the service tier component returnCustomerAddress() was called, and its name makes it exceedingly clear that the Customer

Address is what is returned to the application CustomerGUI(). And that is what we are going for: being exceedingly clear as one reads the code.

The use of returnCustomerAddress() allows us to code to a Name Space. One reading the code, reads a call to returnCustomerAddress(), which is

far more clear of intent than a call to returnCustomerMasterRow() is.

Don’t worry if the coding seems excessive, or not taking advantage of the DAL code directly (sharing), because coding to Name Spaces, where the

Name describes what the call specifically does calls the DAL component returnCustomerMasterRow() under the covers anyways, and there is no

measurable cost in performance, but the readability is greatly improved. That means less bugs, easier to support, easier to understand!

Too Many Reads of Same Row is Inefficient?

Is it? What if your code needs Customer Address and Customer Balance? Create two services that both call returnCustomerMasterRow()? This

seems too inefficient because it seems the customer row will be read twice, right? And everyone knows a developer must minimize the number of

reads and writes to the database for fastest execution times, right?

Wrong!

The first call of returnCustomerMasterRow() would pay the cost in time of the system fetching the required customer master row. Subsequent

fetches of that same customer master row are from the cache, which is in RAM memory, and fetches of data from the cache are very cheap in time

and resources. This is a small price for making the code very readable, making the intentions very obvious. In other words, a 2 nd call to the same

row is cheap because that row was placed in RAM by the first read.

Tier to Tier Component Calls – What is Allowable?

And because components are forbidden to call components in higher Tiers or skip Tiers, the following call structure would not be allowable:

returnCustomerAddress()

CustomerGUI()

CustomerMasterTable

returnCustomerMasterRow()

CustomerMasterPrimaryVIEW

CustomerMasterTable

In other words, callers are limited to calling/referencing components in the same or next lower Tier.

Let’s discuss this MTA diagram starting at the bottom tier and working our way up (going from concrete to abstract).

The Tiers

Database Tier - Purple

The Database Tier is made up of 3 sub-layers:

1. Data-Access Layer

2. View Layer

3. Table layer

Table Layer

At the bottom of the Database Tier is the Table layer, and this is where all tables are placed. The only components that can reference these tables

directly are SQL VIEWs in the View layer right above the Table layer. Components in other layers are not allowed to reference tables directly.

These tables have Constraints defined to them and this is where a lot of the business logic is enforced, as well as references between tables.

There are 5 types of constraints:

1. NOT NULL

2. DEFAULT

3. CHECK

4. PRIMARY KEY (referential integrity)

5. FOREIGN KEY (referential integrity)

DB2 INDEXes can be placed over TABLEs to speed up row access, however one must be mindful to not build too many or too few because too many

can slow down INSERTs, UPDATEs and DELETEs and too few can slow down row access.

All TABLEs must be normalized, and this means nearly all of them must have defined two types of Primary Key, the first being a Natural PRIMARY

KEY and the 2 nd is an Unnatural PRIMARY KEY (IDENTITY column) and doing this will satisfy Normal Form (1NF). More on normaliztion later in this

document.

View Layer

The View layer is right above the Table Layer, and as you may guess the View Layer is made up of SQL VIEWs which are built over the components

in the Table layer. These VIEWs are the only components in the MTA that are allowed to access the components in the Table layer directly. Each

TABLE has a PRIMARY VIEW defined to it, and it is this VIEW that is used for INSERTs, UPDATEs, and DELETEs for a TABLE. In this way, such

operations are not allowed to happen directly to a TABLE.

SQL VIEWs abstract TABLEs.

SQL VIEWs are custom interfaces into TABLEs and are created as required by an application. There can never be too many VIEWs because the

number of VIEWs does not slow down processing on a server the same way that too many Logical Files or INDEXes will. This is because VIEWs are

not maintained by the system if they are not materialized (open), whereas Logical Files and INDEXes in nearly all case are maintained even if they

are not open, and this maintenance can slow system response-time during I/O (INSERTs, UPDATEs, DELETEs).

Data-Access Layer

Data-Access Layer (DAL) components are programs that extract (SELECT queries), INSERT, UPDATE, and DELETE row data from components in the

View Layer. The components in the DAL are the only ones allowed to access components in the View layer directly.

These DAL components abstract components in the View layer.

DAL components can be written as RPG sub-procs, or native DB2 Stored Procedures, DB2 User Defined Functions (UDF), or DB2 TABLE Functions

(UDTF).

DAL components which return data from VIEWs should often be coded to return a page of rows at a time, and this page of rows can be an array or

CURSOR. This keeps the execution speed of DALs very fast. In other words, a DAL component must almost never return an entire result-set to a

caller.

All DAL components are coded to a Namespace (to be defined soon).

Components in the DAL are the only ones which can have raw SQL statements. It is very important that SQL statements are not strewn across

all the other tiers of the MTA.

Services Layer

Components in the service layer provide functionality for the application, presentation and other service layer components. Components in the

service layer get data, and process data through calls to components in the Data-Access Layer (DAL).

Components in the Service Layer are the only ones which can call components in the DAL.

Components in the Service Layer must not contain any raw SQL statements.

All Service components are coded to a namespace (more on this later).

Presentation Layer

The Presentation Layer contains components which provide human-readable (human-interfaced) input or output such as these:

1. UIs

2. Emails

3. Texts

4. GUIs

5. Green Screens

6. Printed Reports

These components get their data and data processed by calling components in the Service Layer.

The Presentation Layer must not have any raw SQL.

Presentation components provide humans an abstract view of the application.

Presentation type components must only be placed in the Presentation Layer.

Application Layer

The Application Layer contains components which provide the highest level of functionality, and for this reason they are often called from menus

which end-users use, from drivers, orchestrators, as web services, and command lines, but they can also be called by other programs in the

application layer.

Application Layer components control, delegate, orchestrate, and contain little other logic. Application Layer components mostly just call

components in the service and presentation tiers, delegating functionality to components in those tiers. Delegation will be discussed later in this

document.

Application Layer components can only call components from the Presentation, and Service layers.

Components in the Application Layer must not have any raw SQL statements.

Tying it All Together

The colors in this diagram correspond to those on the MTA diagram, so refer to the MTG diagram when reading this section.

MTA is a Good Fit for Agile Teams

Coding to a modern MTA provides a lot of benefits to the Agile/Scrum/Kanban frameworks for project workflow:

1. Because everything is a service, construction of each service can be assigned to each team developer.

2. Coders of services do not have to know the big-picture to code their microservice, so ramp up does not require discussion of the entire

solution.

3. Construction of various components of a solution can be done concurrently (not serially).

4. Team developers exchange contract (calling parameters) definitions so that they know how to call each other’s services.

5. Placing a call to a service not yet completely code is possible because a shell of the service is quickly made available for calling long before it

is completely code. This shell defines the contract, and this is what makes this possible.

6. Unit testing of each microservice can be done before it is called by other programs.

7. Unit testing goes faster.

8. QS testing goes faster.

9. Story assignment can be done along component lines.

10. Code tends to be higher in quality.

11. Team management is a lot easier.

12. Ramping up of new teammates is faster, easier.

Enforcement of Standards, Methods, and Business Logic

As the catalog of microservices and APIs grows, and when developers are told to first utilize those existing, a benefit is that standards, methods,

and business logic pre-defined in existing APIs are available to inflight and future projects. You might call this Management by API.

The thinking here is that developers that utilized existing APIs are more likely to adhere to coding in expected ways, and are less likely to re-invent

the wheel, or introduced strange and rogue logic to the codebase.

MTA is a Good Fit for Agile Teams

Coding to a modern MTA provides a lot of benefits to the Agile/Scrum/Kanban frameworks for project workflow:

1. Because everything is a service, construction of each service can be assigned to each team developer.

2. Coders of services do not have to know the big-picture to code their microservice, so ramp up does not require discussion of the entire

solution.

3. Construction of various components of a solution can be done concurrently (not serially).

4. Team developers exchange contract (calling parameters) definitions so that they know how to call each other’s services.

5. Placing a call to a service not yet completely code is possible because a shell of the service is quickly made available for calling long before it

is completely code. This shell defines the contract, and this is what makes this possible.

6. Unit testing of each microservice can be done before it is called by other programs.

7. Unit testing goes faster.

8. QS testing goes faster.

9. Story assignment can be done along component lines.

10. Code tends to be higher in quality.

11. Team management is a lot easier.

12. Ramping up of new teammates is faster, easier.

Enforcement of Standards, Methods, and Business Logic

As the catalog of microservices and APIs grows, and when developers are told to first utilize those existing, a benefit is that standards, methods,

and business logic pre-defined in existing APIs are available to inflight and future projects. You might call this Management by API.

The thinking here is that developers that utilized existing APIs are more likely to adhere to coding in expected ways, and are less likely to re-invent

the wheel, or introduced strange and rogue logic to the codebase.

Attributes of Modern Software

Placing the components of a solution in their proper MTA tier is essential, but unless the components are coded in the best ways, the solution

cannot be perceived as modern and the quality of such code will be suspicious, hard to maintain, low in shareability, hard to test, and hard to learn.

The following sections will discuss the attributes of modern software in detail.

Program Code Decoupled from Database

One of the best features of MTA is the decoupling of the database to the programming code. This means that the components in the higher tiers

do not need to know the structure of the database tables, nor the organization of the database. This is accomplished by placing Data-Access Layer

components between the programming code and the database.

In this way, the DAL components abstract the database to the programming code in the higher tiers. Since components in the higher tiers Service,

Presentation and Application get their data and data processed from calls to components in the service layer, they do not need to know much

about the database.

See the disconnect here? The decoupling?

Benefits of Decoupling the Program Code from the Database

1. The incidences of table level-checks is greatly minimized if not removed

2. The amount of code that must be recompiled after database changes is greatly minimized

3. Only the code in the DAL is susceptible to the potential need for recompiles after database changes and this impact is minimal

4. Developers writing code in the Application, Presentation, and Service Layers do not need to know much about the Database

5. Replacing tables or renaming tables only effect views which maintain static interfaces, minimally effecting programming code in higher tiers

The components in the VIEW layer abstract the tables to components in the data-access layer.

Components in the DAL remove the need of components in higher tiers to use SQL to get data and get data processed.

Loosely Coupled Components

The components across all the tiers of an MTA are said to be loosely coupled if each component is stand-alone, contract-enforcing, domain-

agnostic, independent, stateless, and self-contained which means that they each do not directly rely on states and resources kept in other

components to execute.

In other words, there are no tentacles or wiring between components which are relied upon for each to execute properly, as would be the case in

the dreaded traditional, monolithic and tightly coupled ways of coding software.

The Opposite is Tightly Coupled

Tightly couple solutions blur the lines between what each component does, their purposes, and their functionality crosses the borders of an MTA.

No Separation of Concerns (SoC) is enforced. In such solutions, components communicate with each other in ways outside of just their parameter

lists so there is no well-defined contract. States of one are maintained in one or more other components, and states are often remembered

between calls between such tightly coupled components. Such components are too specific to a particular application, function, process or

domain. Such components are too interrelated, too dependent upon others, and pieces of logic one component is responsible for is often found in

others. A separation of concerns is not maintained. Subroutines are often heavily used, which rely on shared global resources.

If a company were tightly coupled, one of the tasks for a Janitor would be to maintain the General Ledger, one of the tasks of the CEO would be to

sweep the floors, and the order-entry staff would be tasked with taking orders, picking those orders from the warehouse and helping drive the

delivery trucks. Tightly Coupled systems make for a real mess because lines of responsibility are blurred, not well defined, and there is no

separation of concerns (SoC).

In short, tightly coupled solutions make for a complicated spider web of messy tentacles of logic across many parts of the code, making testing,

upgrading, sharing, supporting, ramping up personnel and debugging difficult.

Components Relate to Each other by Contract Only

Another way to describe loosely-coupled components is that such components relate to each other solely through a contract. A contract is a term

which refers to interfaces between components, such as calling parameters, both input (request parameters) and output (response parameters).

Because components communicate with each other only by Contract, this means the relations between them are clean, and well defined.

Loosely-Coupled Components Must exist in Correct Tier

For a solution to be truly loosely coupled, separation of concerns must be enforced, which means that each component must be placed in the

correct Tier, and secondly the only way loosely-coupled components communicate with each other is via their contracts (calling parameters).

Loosely Couple Solutions are Not Necessarily Application/Domain Specific

For example, if a service must be created to administrate MQ high-speed ques, it is coded in such a way that it could be a solution for any

application or domain requiring MQ services. So, this solution (especially if it’s in the service layer) might work for applications having to do with

Healthcare, Trucking, Banking, Retail and any application or domain. So, let’s say this loosely coupled solution is to support healthcare claims

processing. If a business rule for a particular request MQ Que is to be defined in the code, it should NOT be defined in the code that makes up the

MQ Administration services. Such a business rule would be placed in a different layer, probably the Database layer. In this way, tentacles between

the service layer and the application layer must never exist, thus Loosely Coupled.

Loosely Coupled in Summary

A Loosely Coupled system is made up of stand-alone components. This means each component contains all the resources and means of doing a

specific task. Additional data and sub-tasks it requires are received/performed via a call to another component and the only way these two

components communicate is via their contracts. The states of each component are forgotten between calls to it, so it is said to be stateless.

Separation of Concerns

The term Separation of Concerns (SoC) has to do with keeping the concerns of each component focused on their one thing that they do. In this way

each service contains logic that supports just that one task that they are required to do, and such services are given a namespace (more on

namespaces soon). There is the danger of that one concern creeping into one or more other concerns, and this is something we want to prevent

from happening.

In the context of software, SoC must be maintained at several levels:

- MTA Tier

- Program

- Sub-Proc

Some examples

1. A component that is responsible for extracting data from the database must not be placed in any other Tier except the Data Tier (specifically

the Data-Access-Layer).

2. A component that provides a GUI interface does not belong in the Service Layer.

3. A sub-proc that returns Customer Name must not also return Customer Address.

4. An Application component must not get the Customer Address via embedded SQL statement.

5. A component that is responsible for updating Table X does not also delete rows from Table Y.

SoC can also apply to non-software structures:

1. An QA analyst probably is not asked to code a program.

2. The CIO normally does not QA software.

3. A Developer is not responsible for defining the Requirement.

4. A C-Level manager does not define the Contract (parameters) between two components.

5. The Scrum Master usually does not perform code reviews.

6. The Business Analyst does not generally sit in developer code design sessions and make those decisions.

7. The Product Owner probably will not design the code architecture.

Services and Need to Know Basis

When calling a service via its contract, one must never pass to it more or less information than that service requires. In other words, the service

must only require parameter data it needs to function, no more, no less, and return only what is required of it.

Don’t Plan Ahead!

Do not be tempted to plan-head by passing in more parameters than are required because you think you’ll need that information in the future.

While this may sound wise, in modern development this is a big no-no. Only add additional parameters at the time they are needed, not before.

Justify each and every parameter which makes up a service contract.

DO NOT PLAN AHEAD! CODE SERVICES WITH WHAT YOU KNOW TODAY.

Modular in Structure

One if the keystones of modern software construction is “write once, call from everywhere”, something I stole from what has been previously said

about Java. On IBM i we can do the same thing. To make our code sharable, it must be modular, but modular in the right way. In past decades,

modular meant a program with a lot of subroutines, but today that does not go far enough.

This is not to say that we should not use subroutines. They still have their place, but in these modern times, we use them a lot less. These days the

reason for subroutines is to take a block of mundane code and move it to a subroutine, to remove clutter, bulk, making the main code more

readable. However, if that logic to be placed in a subroutine has share-value, if that logic that could be leveraged elsewhere, then it should be

placed in a sub-procedure (sub-proc), and it must be decided if that sub-proc should be exported or private.

Here are some good use-cases for placing code in a subroutine:

- Many eval statements that move data from a large DS to DSPF output fields.

- Logic that checks what optional input parameters were passed into a called sub-proc.

- The need to initialize many fields.

- Placing partitioned logic of a particular sub-task and which should not be shareable.

- Placing mundane logic for IF, DO UNTIL, and DO WHILE, CASE, and SELECT program structures.

Basically, anytime you have a lot of mundane code that if moved into a subroutine would improve code readability this is reason enough and

provided that logic is not a good candidate for sharing.

Are Sub-Procs like Subroutines? They are not. Let’s see why in this table:

Subroutines vs Sub-Procs

Container Type Pros Cons

=====================================================================================================================================================================

Subroutine Cluttering logic can be placed in a subroutine to improve Cannot be shared by other procedures and programs.

code readability.

All resources are global to a subroutine. This is a con too: All resources are global to a subroutine.

Cannot define resources hidden (encapsulated) within a subroutine.

The code inside a subroutine is visible to the code outside the subroutine.

No ability to define parameters.

Calls to Sub-Procs are a tiny bit slower than calls to Recursive calls are not allowed for subroutines.

subroutines, but as time marches on, this difference in

execution speed is becoming imperceptible.

Code in subroutines allow calls from other parts of the Subroutines cannot be used as functions, as they do not return anything.

program. Not obvious what the inputs are.

Not obvious what the outputs are.

Not obvious what value it returns.

Not obvious what the code does.

Subroutines cannot be shared outside the procedure they reside in.

Can be called from many places within the procedure the Calling parameters (contract) cannot be defined and enforced for a subroutine.

subroutine is in.

--------------------------------------------------------------------------------------------------------------------------------------------------------------------

Sub-Proc Cluttering logic can be placed in a Sub-Proc to improve code Calls are to Sub-Procs are a tiny bit slower than calls to subroutines, but

readability as time marches on, this difference in execution speed is becoming

imperceptible.

Can be called by other Sub-Procs in the same module, and if

exported can be called by other programs.

Local resources can be defined to the Sub-Proc and only

visible to the Sub-Proc.

Resources inside a Sub-Proc can be made stateless.

Logic inside a Sub-Proc is encapsulated and not visible to

code outside the Sub-Proc.

Input and output calling parameters can be defined for Sub-

Proc

--------------------------------------------------------------------------------------------------------------------------------------------------------------------

Procs Local resources are automatically initialized each time a Sub-

Proc is called.

Compile-time errors are flagged for programs calling Sub-

Procs to insure calling parameters are the correct type.

Recursive calls to a Sub-Proc are allowed.

Sub-Procs can be used as functions, as they can return a

typed value outside its parameter list.

The calling parameters for a Sub-Proc can be defined as

input-only, output, and input-output, and they enforce types

at compile-time.

Can be exposed to callers outside the *SrvPgm the Sub-Proc

lives in.

Logic can be encapsulated (hidden) inside a sub-proc.

The default for sub-proc resources is stateless, but using the

keyword STATIC a resource can be made stateful.

Calls to a sub-proc can be made from IF, DOU, and DOW

statements.

Because there are many pros for using Sub-Procs, we must prefer them over subroutines, and use them heavily. The use of subroutines should be

sparingly and for the few reasons listed above this table of pros and cons. If you are not sure, error on the side of using a Sub-Proc.

Types of Sub-Procedures

There are two types of Sub-Procedures:

1. Helper Services which are not exported (not exposed, private) and are private to the module they’re found in.

2. Exported Services (exposed)

Helper Sub-Procs

When coding a Sub-Proc (aka service, method, function, microservice), the developer needs to determine who can call it. Sometimes a Sub-Proc is

there only to support the exposed Sub-Procs in a service program. Such Sub-Procs are local only to the service program they are in and these are

referred to as Helper Sub-Procs.

Such Sub-procs are not exported (exposed) to outside callers because if they were, it could be dangerous to data-integrity. In other words, the Sub-

Procs allowed to call such Helpers call the Helpers within a certain context, and the type of context that outside callers would not or cannot

consider.

Here are some examples of Helper Sub-Procs:

- A helper that unconditionally updates a table.

- A helper that unconditionally calls an important updating process.

- A helper that unconditionally calls a web service that POSTs, PUTs, or DELETEs.

- The context of a call to a helper service is that the helper is called only from services within the same module.

- A helper which contains logic exceedingly specific to the exposed services calling it (low share-value).

Such helpers are called under the context of being safe to call because the callers validated things, making sure that it is safe to call the helper first.

If these helpers were exposed, there would not be any assurance that outside callers would first make sure it was safe to call that Sub-Proc.

Not all Sub-Procs are to be exposed, so do not expose all Sub-Procs as a general policy, unless you can justify their exporting.

Exposed Sub-Procs

Exposed (exported) Sub-Procs allow calls from other programs and procedures locally and outside of a service program.

When coding exported Sub-Procs, one needs to be mindful of how it will be called, the context of the call.

For example, if an exposed Sub-Proc will update the database, then it must first validate the input parameter values passed to it when called. It

must never assume the caller has previously validated the data.

The thinking here is, a Sub-Proc cannot force callers of it to first validate and make sure the call is safe, however the exposed Sub-Proc must

assume the call may not be safe and for this reason the exposed Sub-Proc must perform whatever validations are required before effecting the

database. Does this make for a lot of I/O? Yes, but the safety this provides is well worth it, and besides, those extra I/O are often from the cache,

so who cares? ;-)

In summary, exported Sub-Proc functionality is conditional (validations of contract), and helper Sub-Proc functionality could also be conditional,

but often does not need to be.

All Services Assigned a Name Space

One of the most helpful things you can put in your code is to make sure that the names of the services you are calling say exactly what the call is

going to do, and those names should not be abbreviated, nor too short (names can never be too long).

Naming Sub-Procs, functions, services in a way that matches the name with the functionality is to give the called service a Name Space. Accurate

namespace minimizes ambiguity about what a service does. Doing this allows your code to be more self-commenting, and a lot easier to read and

for others, even non-developer types to understand. Doing this makes it exceedingly obvious what it is your code is doing.

For example, a service called returnCustomerAddress() must return a customer’s address and no more and no less. Its functionality must match its

name 100%. Stay away from abbreviations like rtnCustAddr(), or returnCustAddr() or similar.

Here is an example:

Let’s say your program is a UI and you need to put a Customer’s Name on the UI panel. You know of an existing service called

returnCustomerInformation() that returns many things about a customer. If your program calls this service, you can cherry pick the Customer

Name from the returned data. And this would work perfectly for your need.

But there is a better way to do this; a way that makes it more obvious what it is your code is doing. It would be far better for you code to call a

service called returnCustomerName(), and inside the code for returnCustomerName() there is a call to returnCustomerInformation(). The name

returnCustomerName is the assigned Name Space for its functionality. In this way, returnCustomerName() wraps the functionality of

returnCustomerInformation() making it exceedingly obvious what the caller is trying to accomplish when it calls returnCustomerName().

Name Spaces are often assigned to functionality with a service that wraps another more general service.

In this way of thinking, the following services wrap returnCustomerInformation():

- returnCustomerName()

- returnCustomerAddress()

- returnCustomerBalance()

- returnCustomerType()

Such wrapper type Sub-Procs are often coded local to the caller, but could be coded in the same service program the wrapped service is found in.

And if a wrapper is coded in the same service program as the wrapped Sub-Proc, then one could make the wrapped Sub-Proc a helper and not

exported. This is situation-depending.

The result of coding to Name Spaces is code that is very easy to read, reads like plain English, and this makes it easier to code, to debug, and to

ramp up newbies.

You can say that a wrapping Sub-Proc abstracts the wrapped Sub-Proc.

Abstraction

The following short story will help explain what is meant by abstraction, in the context of calling Sub-Procs and called Sub-Procs.

The CEO of a large company asks the CFO to close out the financial quarter on Wednesday and report revenue to the stock exchange.

The CEO does not know how the quarter close is done, nor what tables are to be affected, nor which staff of the CFO will do what part of the close.

All the CEO knows is that the financial quarter must closed on Wednesday and revenue reported to the stock exchange.

Now the CFO knows how to close the quarter, and he knows which staff that will do it, but CFO might not know the names of the tables effected,

but CFO’s staff do. It’s not the job of the staff of the CFO to decide what day the quarter gets closed. Its someone else’s concern, namely the

CEO’s.

The staff of the CFO who close out the quarter do it with computer programs. They evoke these programs, but they do not know how the internals

of those programs work, how the code looks, and how many sub-procedures are involved.

Role Request Responsible The Workflow The Abstraction

==========================================================================================================================================================

CEO Close out the Quarter CFO CFO The CEO orders what is to be done; close The how to close out a quarter and report

& Report to Stock out the quarter and report to the stock revenue to the stock exchange is not

exchange exchange. known to the CEO.

For the CEO, these tasks are abstract.

CFO Close out the Quarter CFO Staffers The CFO is told what is to be done; close The CFO does not make the decision of

& Report to Stock out the quarter and report to the stock when a quarter is closed and revenu

Exchange. exchange reported to the stock exchange.

CFO orders it's staff to close out the quarter The how to close out a quarter and report

and report to the stock exchange. revenu to the stock excahnge is not

known to the CFO because these tasks are

abstracted to the CFO.

CFO

Staffers Request to close out Computer Program The CFO staff uses a computer GUI program. The CFO staffers do not know how the

quarter made to a GUI computer GUI program closes out a quarter

computer program. and reports to the stock exchange. The

instructions executed by the computer GUI

program are abstracted to them.

Computer

Program Calls several services Several Services Computer GUI accepts input parameters from The computer GUI program does not know

which close out the the CFO staff and then calls several how the called services close out the

quarter and report to internal services that perform the tasks. quarter no how revenue is reported to the

the stock exchange. stock exchange, as these tasks are abstracted.

In summary, a person or thing can make a request, and this request is called the what, like in what needs to be done. The requestor does not need

to know the how, as in how the request is to be done. For the requester, the how is an abstraction.

The concept of abstraction goes hand in hand with the concept of Separation of Concerns (Soc).

Abstraction and SoC address roles, what each role is responsible for, what each role needs to know, how each role carries out its tasks, and what

each role does not need to know.

If we write software that is mindful of abstraction and SoC, that software will be very easy to understand, simple, easy to learn, easy to support,

and easy to test and upgrade. Such software will be quicker to develop and requiring less developers. Projects well be completed faster,

cheaper, and of higher quality.

Stateless Services

A service is said to be stateless if it does not remember the state of its variables from the last time it was called. The state of a variable is simply its

value. On the opposite, a stateful service remembers the state of its variables from the last call, so values from one call can corrupt values of

another call of that service.

Stateless services are less likely to have bugs, and each call to them starts its variables with a clean slate because every call to a stateless service

causes all its variables to be automatically initiated to blanks or zeros (*LoVal). This means there is no chance of residual values of a prior call

messing with the current call.

We want all our services to be stateless. And in fact, the only part of a stateless service which holds state are its contract (calling parameter values).

In a service, Global variables are stateful and Local variables are stateless. Services must almost never use global resources.

The Contract Holds State

In this way, the only way two services communicate with each other is through their calling parameters, also known as their contracts.

Services are Agnostic

A service is said to be agnostic if it does not care about the specifics of its callers. That word specifics is a pretty broad word!

In other words, a called service is happy to execute as designed, regardless of these specifics:

- The language of the callers

- The OS of the callers

- The context of the callers

- The application/domain of the callers

- The platform of the callers

- The server of the callers (local or remote)

- The domain of the caller

Now if a service can be agnostic to each item on this list, then it’s pretty darn agnostic!

However, in the real world, the degree of agnosticism of a service often does not cover every item in this list. And situation-depending, it may not

need to.

As an example, consider a service that simply returns a Customer Address called returnCustomerAddress(). This service does one simple thing; it

returns a Customer’s address for a customer number specified in its contract. It is said to be agnostic to the caller because it does not care who the

caller is, it does not care what the caller does with the returned Customer Address, and it does not care who calls it, why it is called, however the

caller must be a HLL program for IBM i.

If returnCustomerAddress() is wrapped as a web service, then the degree of agnosticism is greatly expanded.

Separation of Concerns

In our example above, there is a Separation of Concern (SoC) between callers of returnCustomerAddress() and the service

returnCustomerAddress(). If there is wiring between the caller and the service returnCustomerAddress() outside of the contract, then there is a

violation of loose coupling, and SoC and we must not introduce such violations in our code.

The caller of returnCustomerAddress() is not concerned about how the Customer Address will be determined or where it is found. And the service

returnCustomerAddress() is not concerned with how the caller will use the Customer Address.

You might say that each service must mind their own business!

A service must only know what it needs to know and return to callers only what it needs to return, no more, no less.

Single Purpose Services

Monolithic

The traditional way software was written was in monolithic approaches. Solutions coded monolithically were made up of a set of fewer programs

being very large in the number of statements and such programs each having multi-tasks, multi-purposes. In this way programs did everything and

did little or no delegation of tasks to other programs. Such programs often contained logic that was not shareable, and other programs requiring

similar functionality would simply code that functionality again and again.

Monolithic styles of programming were exceedingly more likely to have bugs, be hard to learn, be hard to support, modify, and test. Projects

coded in monolithic ways took longer to complete, took more developers to complete, and the quality of the finished solution was often very low.

In such a situation, the architecture is said to be single-tiered, tightly-coupled, stateful…in other words a real mess.

A False sense of Modularity

Practitioners of the monolithic approach might disagree, as they would point to the many subroutines their huge programs call. However, a huge

program having a lot of subroutines does not turn a monolithic program into a modular one.

The Monolithic Style has Cost Enterprises $ Millions

Programs written 10, 20, 30+ years ago (and even today) were too often written in the monolithic style. Because of the problems and challenges

inherent in the monolithic style, the cost of such programs for its entire lifetime is massive, and if you add up these massive costs across hundreds,

thousands of such programs, the cost is staggeringly higher over the cost if such solutions were written in modern ways.

But the costs are not just in dollars. The cost is also in time and resources which require managing. The time it takes to complete and implement

solutions in the monolithic style is exceedingly longer than if modern ways were used.

Paints into a Corner

Solutions coded in the monolithic tradition often traps the enterprise into a corner of rigidity so that changes to business logic, processing changes

are nearly impossible, or very difficult to do. There is no turning on a dime.

The fib: Monolithic was the only Way in the Past

This claim is often made to excuse the massive technical debt most RPG shops suffer from.

The truth is a cold hard fact: At the time the old legacy software was written 10, 20, 30, 40 years ago, many modern methods were known and

used in the IT world, but these modern ways were ignored by RPG programmers back then. I can write this because I was one of them.

The use of monolithic ways in current times has not only cost enterprises $ millions, but it has also cost RPG shops their reputations because of

their inability to often create world-class high-quality software quickly with low bug counts. This has caused many enterprises to lose faith in their

RPG practitioners and this is one big reason many RPG shops will or have moved away from the IBM i.

The Paradox

Here is the ugly paradox: The IBM i is the most advanced, most capable, reliable, and most modern OS in the history of IT and it runs on the most

advanced, fastest, most commercial-grade and most capable server known as Power Systems. Yet, IBM i has the unearned reputation for being old,

legacy, backward, obsolete and living in the old Dark Green Ages.

Self-Contained Services

Define the Contract

The contract refers to the calling parameters defined for each service. The required calling parameters must be sufficient for what the service

needs and what the service will provide, no more, and no less. Do not provide parameters that are not required.

Because all services are stateless, state only exists in contracts between services and callers.

The calling parameters which make up the contract have the following attributes:

1. Each parameter must be justified

2. Names of each parameter must be provided an accurate namespace

3. Parameters must almost never be data-structures (passing entire rows, or a wholesale bunch of subfields in a data-structure)

4. Parameters must nearly always be atomic (see bullet #3)

5. An exception to bullets #3 & #4: result-set arrays can be passed between caller and callee with a pointer for pointer-based DS’s which

allows sharing of resources between caller and callee.

6. Parameters must never mirror table structures (we want to maintain decoupling between data and programs)

7. Never use input-output parameters; use separate input and output parameters.

8. All input parameters must have prefix of in and all output have prefix of out; which removes ambiguity.

9. For RPG, all input parameters must be coded with Const or Value.

10. Nearly all attributes for parameters must not be hardcode, instead use Like.

Don’t define Global Resources

In modular styles of coding, each service must be a self-contained unit. This means that each service, sub-proc, function, and method stands on its

own and the only ways to communicate with or between services is via their contract, which is their calling parameters. Addition wiring, and

tentacles outside of the contract are forbidden, as this will make the solution vulnerable to bugs.

Global resources tend to be stateful, but we need to pivot toward stateless resources (local).

Even two services within the same service program must not share resources, such as the global part of a service module. These global resources

are “F” and “D” spec statements between the Control type statements at the very top and the first PI (program interface) statement for the first

sub-proc.

In other words, the global part of a module should be empty of resources which all contained sub-procs could share. But in the real world, this

cannot always be the case, but it is something we must strive for.

The Bad Kind of Sharing

Modular coding is all about sharing resources, reusing code, and writing once, calling from everywhere. However, there is a type of sharing of

resources which must be avoided as much as possible, and that type is the sharing of Global resources within a service program

Sometimes Global Resources are Indicated

For example, defined *DtaAra’s must be placed in the global area of a service module.

Sometimes the global part of a service module is the best place to define CLOBs because too many big CLOBs could cause execution time crashes

because the single level addressing to RAM places a limit of just 16mb for resources allocated by each job.

Another type of global statement that should often be allowed are those which perform SQL compile declarations.

Exceptions to the “No Global Resources” Rule:

1. Defining *DtaAra’s (otherwise program will not compile)

2. Defining large CLOBs (number of and size of CLOBs depending)

3. SQL Compile Declarations

4. Prototypes for Exposed Sub-Procs (found in copybook source member but also Global)

Self-Documenting

Delegation

One of the most valuable attributes to modular coding is delegation. The programs high in the MTA tiers do the most delegating of tasks, and as

one goes down to the lower tiers, delegation is less and less. And this ties into what was said earlier, and that is that at the highest tiers the code is

very abstract and as one slides down to lower tiers the code becomes more and more concrete. It’s in these lower concrete programs that the

actual logic for performing the required tasks is done.

So, looking at the code in the higher tiers, one sees that they do no heavy lifting, no SQL I/O, no logic that performs the detailed tasks required.

Instead, one sees a bunch of service calls. It seems that all high placed programs have the same pattern; they call service, call service, call service,

call service, then end. And in fact, these high placed callers do not even know how the lower concrete programs work, nor what they do, nor the

structure of database, nor table names, nor view names. For these high placed programs, the services they call abstract the functionality to them.

Because modular code delegates, it is very readable. If these high placed programs are written correctly, their code reads like plain English, and

laypersons can even read and understand much of that code. In this way, the code is self-documenting, especially if called services are named to a

namespace. This is not to say program comments are not needed, but rather that not many comments are required to convey what the program

does.

Need to Know Basis

Very often, when one is looking at program code, and this person could be a developer, a manager, a layperson, they want to get a good feel for

what it does without getting overwhelmed and lost by the details, the minutia of complex logic, the concrete tasks. So it could be enough for one’s

understanding to read the statement returnCustomerOutstandingBalance() without having to deep dive into the complex SQL code that calculates

the returned value.

Very often, the one reading program code does not need to know, nor care about the detailed logic of called services. On the other hand, if one

needs to deep dive the logic of a service, they always have that option to do so.

In other words, what would you rather debug? A program that does too much and is massive, or a very small program that calls a bunch of

services?

Know Where to Place Your Logic

If you need to add code to fix a bug, or to add functionality, it’s not enough to add correct logic. You must also put that code in the right place. You

might argue that placing that SQL SELECT INTO in a top tier program works, and your unit testing could prove this out, but is that code readable? Is

it better that that functionality or fix be delegated to a lower tier service? Is the share-value of that logic lost?

Easy to Read Code

See the section above called Self-Documenting.

If it takes one longer than 5 minutes to get the jest of a program, to get a feel for what it is attempting to do, then it might not have been written

modularly, nor delegated to lower placed services enough.

Easy to Support Code

Here is how you determine if a solution is easy or hard to support: If it takes a newly hired decent programmer more than 30 minutes to figure out

how an entire solution works, then that solution was probably not coded properly. Now one could argue that all the old-timers have no problem

figuring that code out, but of course they can because they have been there for years.

Only Newbies Decide if Solution Coded Well

So, the best metric of determining if a solution was coded well is to see how long it takes newbies to figure it now; not the time it takes the old-

timers.

Code that is easy to support have the following attributes:

1. Modular

2. Heavy use of Delegation

3. All components and logic are placed in their proper tier of the MTA

4. Services are stateless, loosely coupled, each performing 1 thing

5. Services coded to an accurate Namespace.

Build Upon a Growing Catalog of Services

Overtime, as the catalog of services (microservices) grows within a shop, projects take less and less time to complete, and with fewer and fewer

developers, and less bugs. Over time, a shop that leverages a huge catalog of services can do a lot more projects in less time and costing less

money, requiring less developers, and quality gets better and better.

There are many types of services (APIs) which should be found in a Catalog of Services:

1. RPG/C/COBOL Sub-Procs, Functions and Methods

2. Web Services (provisioned and consumed)

3. Native DB2 SQL Stored Procedures

4. Native DB2 SQL User Defined Functions (UDFs and UDTFs)

We all need to stop reinventing the wheel over and over again!

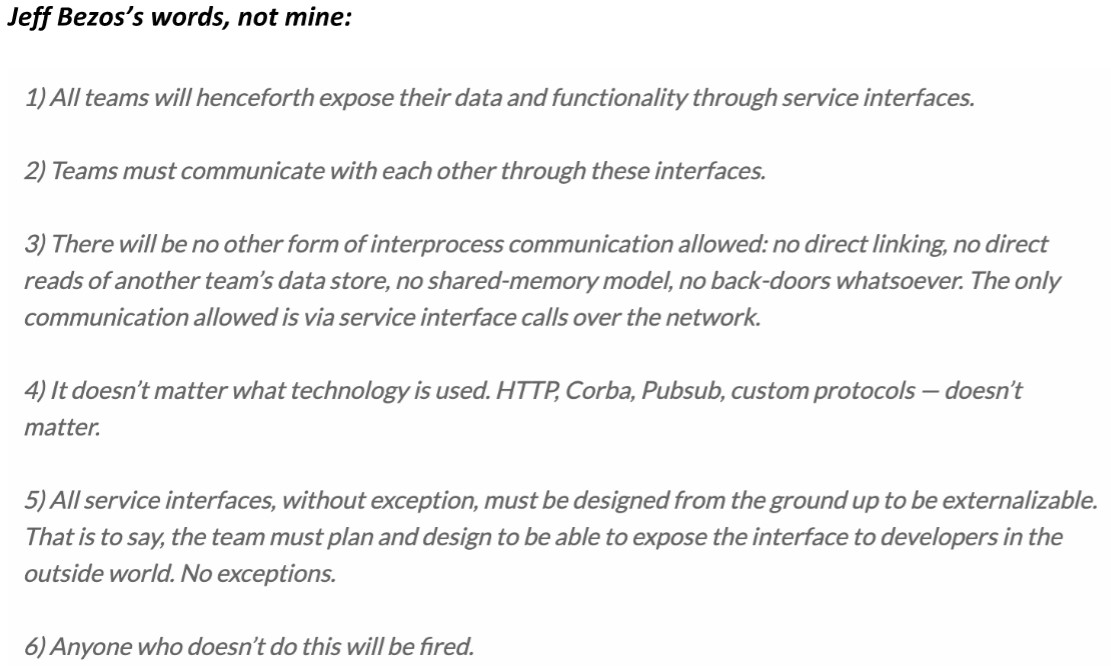

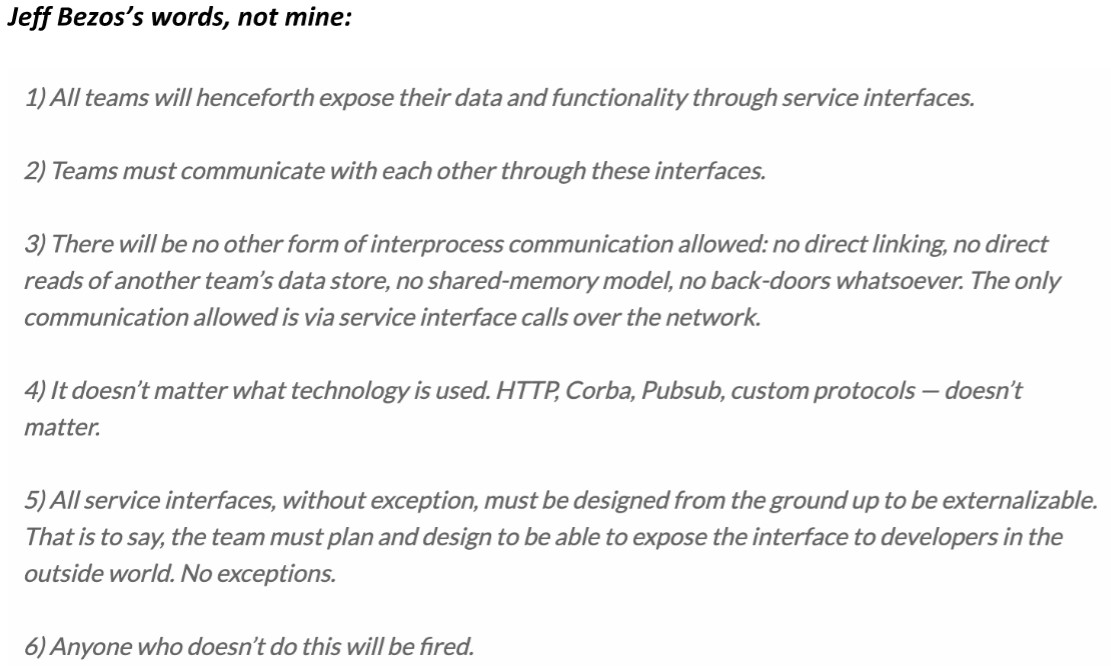

What does Jeff Bezos (richest man in the world and founder of Amazon) have to say about APIs?

Taking Advantage of All Server Processors

Our modern IBM i on Power provides multiple CPUs to get the workload done as soon as possible. Sadly, the way solutions have been architected

traditionally for IBM midrange computers has not often enough taken advantage of those multiple processors, nor the ability to do more tasks

concurrently.

Stop Submitting Batch Jobs

For example, jobs are submitted to a batch *JobQ too often. This design pattern sounds simple and effective enough but it’s the worse way to get

units of work done because it serializes the workload instead of multiplexing that work so that it is done concurrently. The problem with submitted

jobs is that each job has to open up the tables, stand up the service programs, and build the cache from scratch. These things take a lot of time and

resources, and if a solution is submitted thousands of times each day, then the system has to perform that over head over and over again

thousands of times.

There are use-cases for submitting jobs, but that list is very short. Consider submitting a batch job once which listens for events, and wakes up

periodically to do processing. Such jobs run 24/7.

Less Synchronous Processing, More Asynchronous

Asynchronous processing is all about loosely coupled components which make up a solution. In other words, keep all components running at the

same time. Do not use serialized logic. Keep the processors working, minimize idle time.

Stop Closing Tables & Cursors

Opening and closing cursors, tables, views, building data caches, and instantiating service programs are expensive and time-consuming tasks. It

would be far better to open once, perform soft closes, initialize the cache once, and instantiate services once, because doing this makes for much

faster execution times, and leverages a cache of database rows which can make the app run faster and faster.

Design Asynchronous Solutions

Instead of using the old traditional tired design pattern of submitting jobs, it would be far better for a program to write a request into a *DtaQ

which is listened to and acted upon by an always running job in the background (a batch job submitted once and runs 24/7). This background job is

loosely coupled to the job that places the request entry in the *DtaQ. This design pattern offers loosely coupled solution, a Separation of Concerns,

and asynchronous processing which would take advantage of concurrency and the multiple processors of the server.

In other words, you want to architect solutions which have:

1. Most Components moving concurrently

2. All Components of solution loosely coupled

3. Virtually no Serialized processing

4. All Moving Components Respect a Separation of Concerns (Soc)

5. One Moving Component can be paused/stopped without effecting the others.

6. The solution is built from the ground up to be scaled.

Build a Bunch of Service Requesters and Service Providers

Apply architectures that provide for programs that make requests, and others that provide services, such that the requests are placed in persistent

*DtaQ’s which are listened to by the Service Providers. There can be exceptions to this direction, but they are rare and infrequent.

When to Use Synchronous Design Patterns

Often GUIs and other UIs require results Realtime (aka NOW). For these requirements, synchronous services must be called. Such services are

Web Services, and services called by UIs with some exceptions.

Keep a bunch of “plates” spinning at the same time...go ahead, the IBM i can handle it!

Taking Advantage of All Server Processors

Our modern IBM i on Power provides multiple CPUs to get the workload done as soon as possible. Sadly, the way solutions have been architected

traditionally for IBM midrange computers has not often enough taken advantage of those multiple processors, nor the ability to do more tasks

concurrently.

Stop Submitting Batch Jobs

For example, jobs are submitted to a batch *JobQ too often. This design pattern sounds simple and effective enough but it’s the worse way to get

units of work done because it serializes the workload instead of multiplexing that work so that it is done concurrently. The problem with submitted

jobs is that each job has to open up the tables, stand up the service programs, and build the cache from scratch. These things take a lot of time and

resources, and if a solution is submitted thousands of times each day, then the system has to perform that over head over and over again

thousands of times.

There are use-cases for submitting jobs, but that list is very short. Consider submitting a batch job once which listens for events, and wakes up

periodically to do processing. Such jobs run 24/7.

Less Synchronous Processing, More Asynchronous

Asynchronous processing is all about loosely coupled components which make up a solution. In other words, keep all components running at the

same time. Do not use serialized logic. Keep the processors working, minimize idle time.

Stop Closing Tables & Cursors

Opening and closing cursors, tables, views, building data caches, and instantiating service programs are expensive and time-consuming tasks. It

would be far better to open once, perform soft closes, initialize the cache once, and instantiate services once, because doing this makes for much

faster execution times, and leverages a cache of database rows which can make the app run faster and faster.

Design Asynchronous Solutions

Instead of using the old traditional tired design pattern of submitting jobs, it would be far better for a program to write a request into a *DtaQ

which is listened to and acted upon by an always running job in the background (a batch job submitted once and runs 24/7). This background job is

loosely coupled to the job that places the request entry in the *DtaQ. This design pattern offers loosely coupled solution, a Separation of Concerns,

and asynchronous processing which would take advantage of concurrency and the multiple processors of the server.

In other words, you want to architect solutions which have:

1. Most Components moving concurrently

2. All Components of solution loosely coupled

3. Virtually no Serialized processing

4. All Moving Components Respect a Separation of Concerns (Soc)

5. One Moving Component can be paused/stopped without effecting the others.

6. The solution is built from the ground up to be scaled.

Build a Bunch of Service Requesters and Service Providers

Apply architectures that provide for programs that make requests, and others that provide services, such that the requests are placed in persistent

*DtaQ’s which are listened to by the Service Providers. There can be exceptions to this direction, but they are rare and infrequent.

When to Use Synchronous Design Patterns

Often GUIs and other UIs require results Realtime (aka NOW). For these requirements, synchronous services must be called. Such services are

Web Services, and services called by UIs with some exceptions.

Keep a bunch of “plates” spinning at the same time...go ahead, the IBM i can handle it!

Takes Advantage of Concurrency

Solutions which take advantage of concurrency run the fastest, are easiest to support, debug, and understand.

The best way to explain what is meant by Concurrent solutions for IBM i is to talk about one currently running in production:

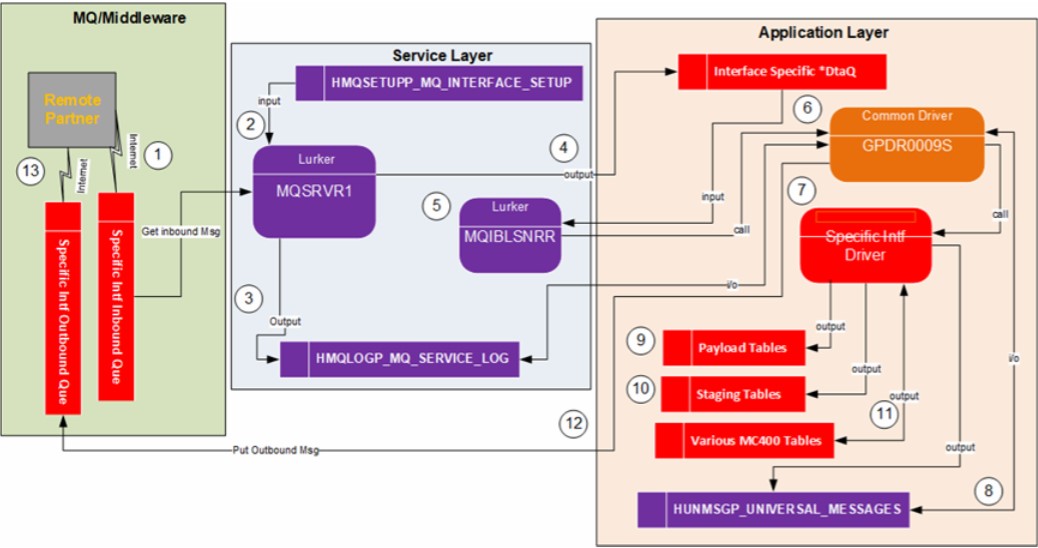

Takes Advantage of Concurrency

Solutions which take advantage of concurrency run the fastest, are easiest to support, debug, and understand.

The best way to explain what is meant by Concurrent solutions for IBM i is to talk about one currently running in production:

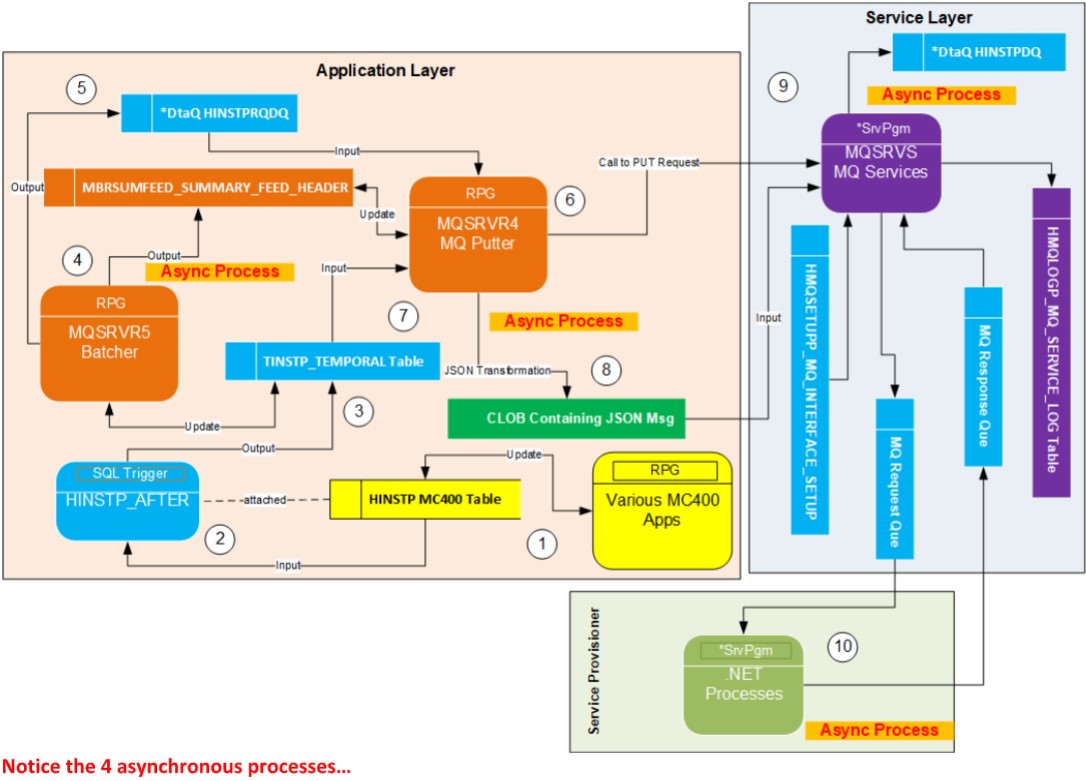

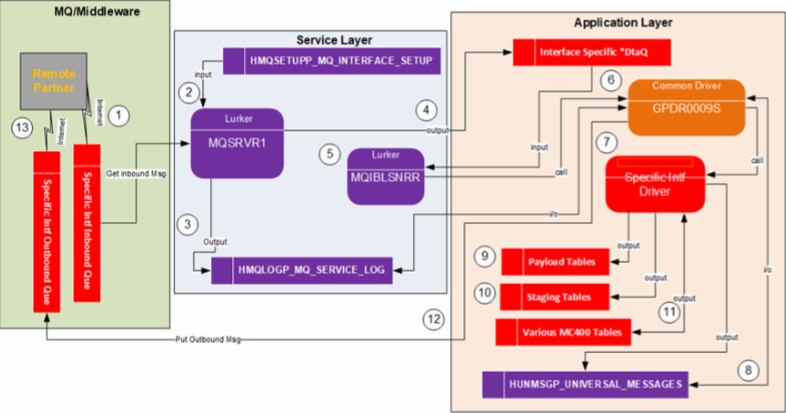

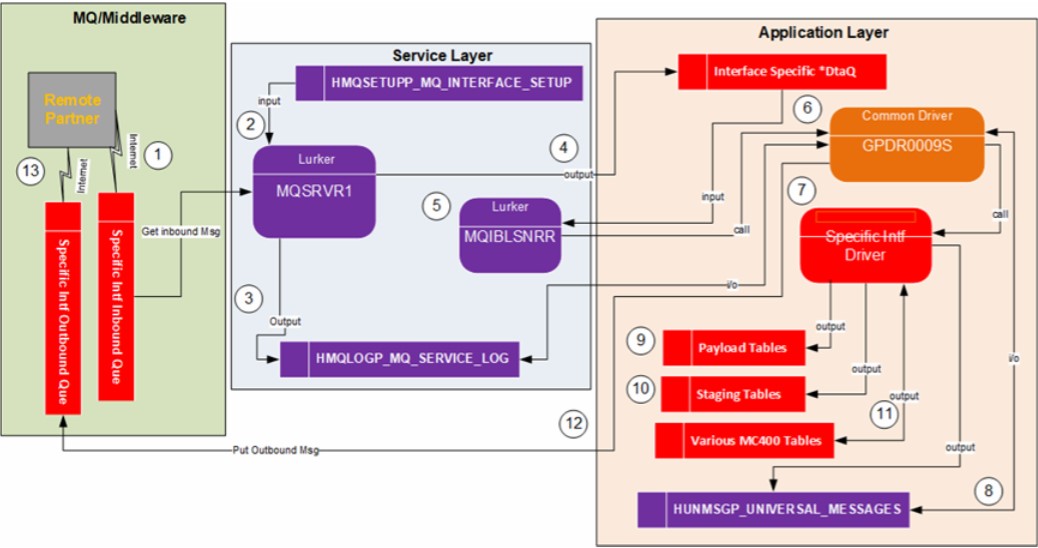

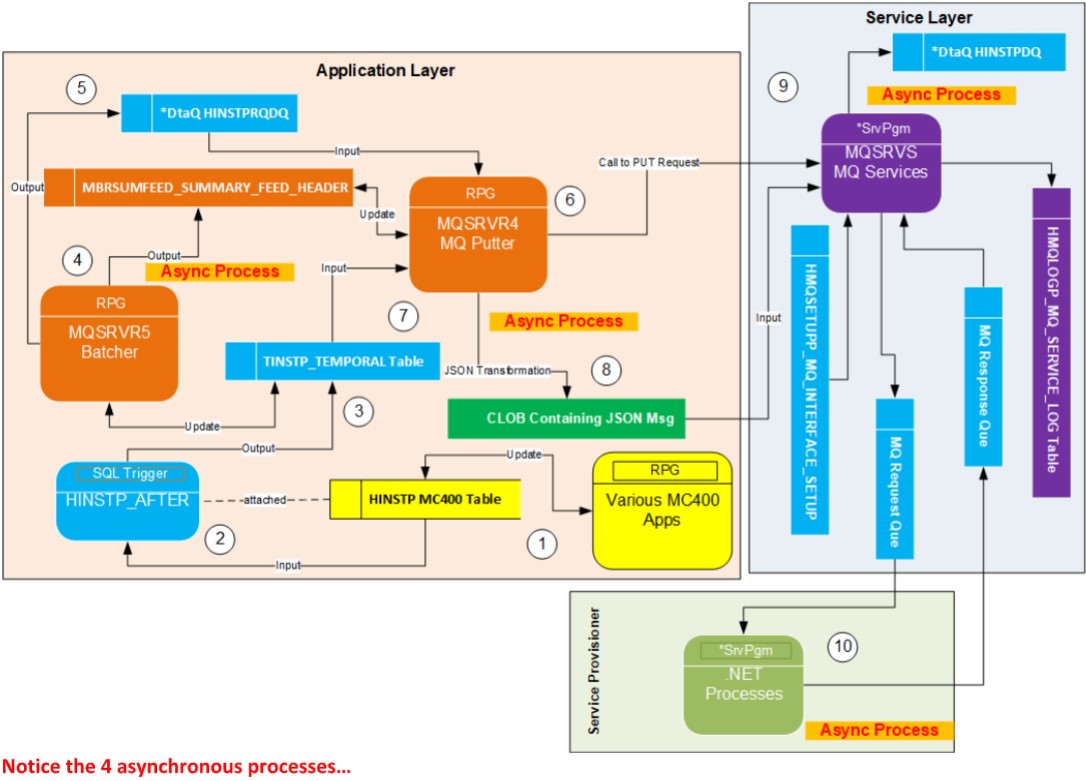

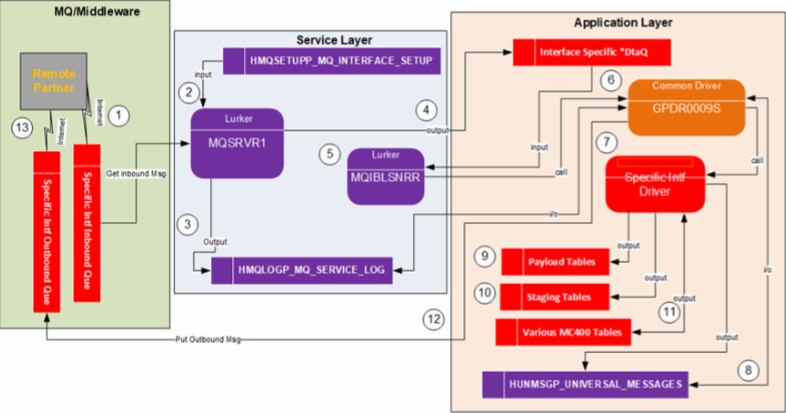

In the DFD (process model) above, this shows the solution for an MQ interface currently running in production. Here is a list of the major moving

parts which are loosely coupled and run concurrently:

1. Remote Partner PUTs Request onto MQ Que

2. Local Listener (Lurker) Listens to MQ Que for Requests

3. Local Listener GETs Requests and place it in Log and *DtaQ

4. Application Listener of *DtaQ processes Request and Returns Response.

Features which make for a very Fast executing Solution:

1. Of the 4 major concurrently running parts, any of them can be paused/stopped without preventing execution of the others.

2. Very little serialized Processing done.

3. The two Listeners (service tier & application tier) are submitted once and run forever in the background.

4. The service programs that support the listeners are instantiated only once.

5. The tables/views used by the listeners are opened once (in some cases soft closes are performed).

6. Data cache instantiated only once.

7. All services, modules, and callers which make up this solution are stateless, loosely coupled, enforced SoC, and a lot of service sharing is

leveraged.

8. Solution is made up of 100s of microservices making support, understanding, maintenance and testing easy.

9. The 100,000+ lines of code across hundreds of services which support this solution are very easy to support, learn, test, debug, and upgrade

with new requirements.